Last week I spent some time redesigning a SSAS multidimensional cube and part of that effort involved re-partitioning the measure group by customer (instead of date) and redistributing the partitions across a new set of drives. Initial testing shows serious performance improvement (which I plan to blog about in a bit more detail in the next few weeks) but today I wanted to focus on a neat trick that helped me recover from a minor boo-boo when getting through the validation tests.

Background

The setup for this particular client is a multi-tenant SSAS cube (~250GB) used to support a specific set of dashboard queries…while there are only ~25 base queries, we’re executing >100k distinct versions based on different parameters (e.g. TenantID, StartDate, EndDate, etc) over the weekends and storing the results in a table which is then used as the source for displaying results on the dashboards. The mechanism for executing the queries is a .Net application created by one of the developers which pulls the base MDX, replaces the parameter values, and executes the final MDX across a load balanced cluster of 7 read-only SSAS query servers.

In order to validate the new design (i.e. ensure that it is producing the same results) we are going to process both versions of the cube with the same set of data. However, as we are limited in drive space, we cannot fit both versions of the cube on our query servers. Therefore our test plan looks like this…

- Process Old-Cube on SSAS-Processing server

- Push Old-Cube to SSAS-Query servers

- Start .Net application to run workload against Old-Cube (currently takes all weekend)

- Deploy New-Cube to SSAS-Processing server and process(At this point, both cubes have been processed using the same underlying data. And once the query-workload completes for Old-Cube we can proceed with…)

- Push New-Cube to SSAS-Query servers

- Start .Net application to run workload against New-Cube

- Compare stored results between each run

Problem

Got the plan, working through the steps and just as the New-Cube finishes processing on the SSAS-Processing server, I realized I had made a small boo-boo.

Apparently, while writing the script to re-partition the SSAS-database, I’d completely forgotten to relocate one of the measure groups off of the old drives over to the new ones. Now, even though this was a really really small measure group (not even partitioned – which is probably why I forgot to address it in the script) it was still an important piece of the plan since we also wanted to reduce our footprint on the SAN to free up some drives…which we couldn’t do until the data had been moved to the new drives.

To make matters worse, because the ETL had already started back up and the underlying data upon which both the old and new cubes were processed had changed I couldn’t afford to have to reprocess the New-Cube without screwing up the validation test. Bottom line, I needed a way to move a partition that had already been processed to a new drive … and I needed to do it without un-processing partition.

A quick bit of testing proved that the alter-partition XMLA command would result in unprocessing the partition – which I obviously can’t afford. And so it was looking likely that I was going to have to eat my mistake and move the measure group after the load/validation test (which would mean holding off on freeing up the drive space for several more days)…not a big deal in the grand scheme of things, but unfortunate nonetheless.

Thankfully, before giving up, I stumbled upon a great workaround posted by Dave Bond on this connect item. The comments tab will give you an idea of why this capability (i.e. to move processed partitions to new storage locations without having to reprocess them) is important…think: really LARGE partitions that take a long time to reprocess with very small downtime windows.

How to Move A Cube Partition Without Unprocessing It

In order to avoid having to reprocess a partition after moving it to a new storage location, we can create a duplicate partition with the new storage location, process it, merge in the old partition, drop the old partition, and rename the new partition. The rest of this post walks you through an example with screenshots…

Below is a screenshot of the partition we would like to move. As you can see, it was last processed on 1/17/2015 and currently resides in the default storage location. As part of our performance tuning, we would like to move this partition to a new location (i.e. D:\SSAS-Data-New\) …but we want to accomplish this without having to reprocess the data.

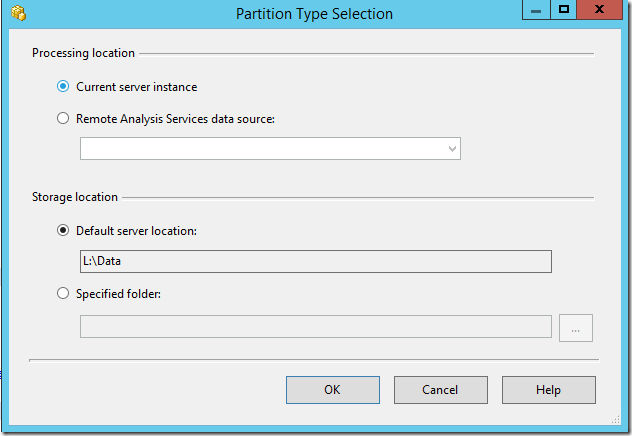

Note: be sure to update the AllowedBrowsingFolders server-level configuration property with the new target location for the partition – otherwise you will be unable to create the partition.

Right-click the partition you want to relocate and select Script Partition as… | CREATE To | …New Query Editor Window. Then update the XML…change the storage location, ID, and name values…Also, don’t forget to update the source query adding a filter so that no rows are processed. If you forget to do this, you will have duplicate data in the partition after the merge which is very bad since it will lead to inaccurate results for reports and analysis.

Note: If the partition you are trying to move is currently using the default storage location, you will need to add the <StorageLocation></StorageLocation> tag to the XML.

The result is a new unprocessed partition…

Now we can process this partition which should be lightening fast since the source query doesn’t actually produce any results to be processed.

<Batch xmlns="http://schemas.microsoft.com/analysisservices/2003/engine"> <Parallel> <Process xmlns:xsd="http://www.w3.org/2001/XMLSchema" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns:ddl2="http://schemas.microsoft.com/analysisservices/2003/engine/2" xmlns:ddl2_2="http://schemas.microsoft.com/analysisservices/2003/engine/2/2" xmlns:ddl100_100="http://schemas.microsoft.com/analysisservices/2008/engine/100/100" xmlns:ddl200="http://schemas.microsoft.com/analysisservices/2010/engine/200" xmlns:ddl200_200="http://schemas.microsoft.com/analysisservices/2010/engine/200/200" xmlns:ddl300="http://schemas.microsoft.com/analysisservices/2011/engine/300" xmlns:ddl300_300="http://schemas.microsoft.com/analysisservices/2011/engine/300/300"> <Object> <DatabaseID>AdventureWorksDW</DatabaseID> <CubeID>Adventure Works DW</CubeID> <MeasureGroupID>Internet Sales</MeasureGroupID> <PartitionID>INTERNET CUSTOMERS 000000 new</PartitionID> </Object> <Type>ProcessFull</Type> <WriteBackTableCreation>UseExisting</WriteBackTableCreation> </Process> </Parallel> </Batch>

Next we need to merge the old partition (i.e. INTERNET CUSTOMERS 000000) into the new partition (i.e. INTERNET CUSTOMERS 000000 new).

<MergePartitions xmlns="http://schemas.microsoft.com/analysisservices/2003/engine"> <Sources> <Source> <DatabaseID>AdventureWorksDW</DatabaseID> <CubeID>Adventure Works DW</CubeID> <MeasureGroupID>Internet Sales</MeasureGroupID> <PartitionID>INTERNET CUSTOMERS 000000</PartitionID> </Source> </Sources> <Target> <DatabaseID>AdventureWorksDW</DatabaseID> <CubeID>Adventure Works DW</CubeID> <MeasureGroupID>Internet Sales</MeasureGroupID> <PartitionID>INTERNET CUSTOMERS 000000 new</PartitionID> </Target> </MergePartitions>

The result is that the original partition is gone and all we’re left with is the new partition…which is fully processed and contains all the data.

At this point, we need to update the source query to remove the bogus WHERE-clause predicate so that subsequent processing operations do actually return data from the source)…and we can also change the partition name to fit with the naming convention. However, the partition ID can’t be changed – so if it is important to have the same partition ID (and it might be depending on outside dependencies) you will want to walk to follow the same process as before…only this time you won’t change the storage location ![]()

<Alter ObjectExpansion="ExpandFull" xmlns="http://schemas.microsoft.com/analysisservices/2003/engine"> <Object> <DatabaseID>AdventureWorksDW</DatabaseID> <CubeID>Adventure Works DW</CubeID> <MeasureGroupID>Internet Sales</MeasureGroupID> <PartitionID>INTERNET CUSTOMERS 000000 new</PartitionID> </Object> <ObjectDefinition> <Partition xmlns:xsd="http://www.w3.org/2001/XMLSchema" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns:ddl2="http://schemas.microsoft.com/analysisservices/2003/engine/2" xmlns:ddl2_2="http://schemas.microsoft.com/analysisservices/2003/engine/2/2" xmlns:ddl100_100="http://schemas.microsoft.com/analysisservices/2008/engine/100/100" xmlns:ddl200="http://schemas.microsoft.com/analysisservices/2010/engine/200" xmlns:ddl200_200="http://schemas.microsoft.com/analysisservices/2010/engine/200/200" xmlns:ddl300="http://schemas.microsoft.com/analysisservices/2011/engine/300" xmlns:ddl300_300="http://schemas.microsoft.com/analysisservices/2011/engine/300/300" xmlns:ddl400="http://schemas.microsoft.com/analysisservices/2012/engine/400" xmlns:ddl400_400="http://schemas.microsoft.com/analysisservices/2012/engine/400/400"> <ID>INTERNET CUSTOMERS 000000 new</ID> <Name>INTERNET CUSTOMERS 000000</Name> <Source xsi:type="QueryBinding"> <DataSourceID>Adventure Works DW</DataSourceID> <QueryDefinition>SELECT * FROM [dbo].[FactInternetSalesBig] WHERE OrderDateKey < 20100101</QueryDefinition> </Source> <StorageMode>Molap</StorageMode> <ProcessingMode>Regular</ProcessingMode> <StorageLocation>D:\SSAS-Data-New</StorageLocation> <ProactiveCaching> <SilenceInterval>-PT1S</SilenceInterval> <Latency>-PT1S</Latency> <SilenceOverrideInterval>-PT1S</SilenceOverrideInterval> <ForceRebuildInterval>-PT1S</ForceRebuildInterval> <Source xsi:type="ProactiveCachingInheritedBinding" /> </ProactiveCaching> </Partition> </ObjectDefinition> </Alter>

Obviously you’ll want to script this solution in .NET or PowerShell if you need to move many partitions. Either way, I hope this helps those who find themselves in a similar predicament.

One reply on “Moving a Cube Partition Without Unprocessing It”

Is there a way to do in tabular?

LikeLike